KANBrief 3/16

The development of self-driving vehicles continues apace, but raises a number of questions. Who is liable in the event of an accident? Can and should self-driving vehicles take ethical decisions in order to avoid accidents? Standards developers are already strongly involved in this area and are setting out the technical terms of reference for the new developments.

With regard to accident avoidance, it must first be noted that current systems are not yet sufficiently accurate to register a situation with a precision of 99.999%, and are not therefore an adequate basis for a decision to perform an avoidance manoeuvre. It may be possible for certain rules to be implemented by the technology. It remains unclear however whether such algorithms would be acceptable to society and for that matter to the purchaser of the vehicle, who may have a different view of such ethical aspects.

The need for assured reliability raises the question of the required level of system redundancy. Must multiple redundancy be implemented for each electronic element in the vehicle, as is the case in aircraft design? Who monitors the individual modules? How much time must passengers be given to intervene in the event of an emergency? (The timeframe may extend from a few seconds to several minutes.) During this transitional period, a system would have to continue to function safely in the presence of any conceivable fault. Where a vehicle lacks a steering wheel, the problem is even more difficult, since the passengers would no longer have any means of intervention. Tests are already being performed in this area with redundant systems on all levels. Each algorithm could for example be computed in parallel several times and the results compared. Network and power lines could also be engineered with redundancy.
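The idea of computing an algorithm several times and comparing the results can be illustrated with a simple majority vote. The following is a minimal sketch only, not a description of any system actually under test: the names `vote` and `run_redundant`, the toy braking rule and the strict-majority criterion are all assumptions made for illustration, and the replicas are called one after another rather than on separate hardware.

```python
from collections import Counter
from typing import Callable, Hashable, Optional, Sequence


def vote(results: Sequence[Hashable]) -> Optional[Hashable]:
    """Return the value reported by a strict majority of replicas, else None."""
    if not results:
        return None
    value, count = Counter(results).most_common(1)[0]
    return value if count > len(results) / 2 else None


def run_redundant(
    replicas: Sequence[Callable[[float], Hashable]], sensor_input: float
) -> Optional[Hashable]:
    """Feed the same input to every replica and compare the outputs.

    Hypothetical helper: a real vehicle would run the replicas on
    independent hardware; here they are simply called in sequence.
    """
    outputs = [replica(sensor_input) for replica in replicas]
    return vote(outputs)


# Toy replicas (assumed for illustration): brake if an obstacle is
# closer than 10 m, otherwise keep cruising.
def healthy(distance_m: float) -> str:
    return "brake" if distance_m < 10.0 else "cruise"


def stuck_on_cruise(distance_m: float) -> str:
    return "cruise"  # simulated fault: ignores the input


def stuck_on_steer(distance_m: float) -> str:
    return "steer"  # a second, different simulated fault


# One faulty replica is outvoted by two healthy ones.
decision = run_redundant([healthy, healthy, stuck_on_cruise], 5.0)

# With no majority, the vote yields None and a fallback would be needed.
no_agreement = run_redundant([healthy, stuck_on_cruise, stuck_on_steer], 5.0)
```

In this sketch a single faulty replica is masked, whereas a three-way disagreement produces no decision at all. What the vehicle should do in that case (for example a risk-minimized manoeuvre) is exactly the kind of question raised above.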

Which systems should be approved for homologation, and how can they be tested adequately? Many methods that are trained by machine learning (for example the detection of pedestrians) cannot be verified by formal logic, but only statistically with the use of large volumes of test data. How many test kilometres must a vehicle then complete before it can be considered safe, and what happens when a component in the system receives a software update? Would all test runs then have to be repeated in full? Are the associated costs acceptable, or can they be reduced by the use of simulation?
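The order of magnitude behind the question of test kilometres can be estimated with a standard statistical argument. The sketch below assumes that failures occur independently at a constant rate per kilometre (a Poisson model) and that the test drive is completed without a single failure. Under these assumptions, the distance needed to demonstrate a failure rate below a given bound with a given confidence is -ln(1 - confidence) / rate. The function name and the numerical values are hypothetical and are not taken from any standard or approval procedure.

```python
import math


def required_failure_free_km(max_failure_rate_per_km: float, confidence: float) -> float:
    """Kilometres that must be driven without any failure to show, at the
    given confidence level, that the true failure rate is below the bound.

    Assumes a Poisson failure process with a constant rate: the chance of
    zero failures in n km is exp(-rate * n), which must not exceed
    1 - confidence.
    """
    if max_failure_rate_per_km <= 0:
        raise ValueError("failure rate bound must be positive")
    if not 0 < confidence < 1:
        raise ValueError("confidence must lie strictly between 0 and 1")
    return -math.log(1.0 - confidence) / max_failure_rate_per_km


# Hypothetical target: fewer than one critical failure per 100 million km,
# demonstrated with 95 % confidence.
km_needed = required_failure_free_km(1e-8, 0.95)
print(f"{km_needed:.3g} km")
```

For these assumed values the result is roughly 300 million failure-free kilometres. Under the same model the count would start again from zero if a software update changed the behaviour being tested, which illustrates why the cost of repeated test runs and the role of simulation are raised as open questions.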

Another question is that of the computing architecture in self-driving vehicles. Will autonomous vehicles be built from numerous mini-assistants networked together, each of which fulfils only a sub-task, as is currently the case in vehicle design? Or is comprehensive detection of the environment in any given situation required, performed by a powerful central processor that takes all decisions?

With regard to the infrastructure, it must be clarified whether research should have the objective of vehicles being driven in the same way as by human drivers, or whether the infrastructure should be extended in order for certain problems to be circumvented. For example, traffic lights can probably not be detected by cameras alone; the traffic light would have to communicate with the vehicle. On what scale is modification to the infrastructure acceptable both economically and socially? Will human beings have to be screened from autonomous road traffic by barriers (and separation by levels), as is the case with many highly automated underground rail systems?

How much freedom to make decisions are we willing to yield to the infrastructure? Vehicles are already able to communicate with other vehicles, or can be prevented from moving off by a central control system. This in turn raises questions of data privacy, since each metre travelled can be monitored externally. The exposure of highly automated systems to external attack, for example over the Internet or from jamming transmitters, is highly topical.

From an occupational safety and health perspective, it is important for drivers to be adequately familiarized with how (partly) automated vehicles work. It must also be clarified how employers are to perform a comprehensive risk assessment. For example, the question of which secondary tasks (such as operating equipment or scheduling) drivers are permitted to perform whilst driving is already an issue.

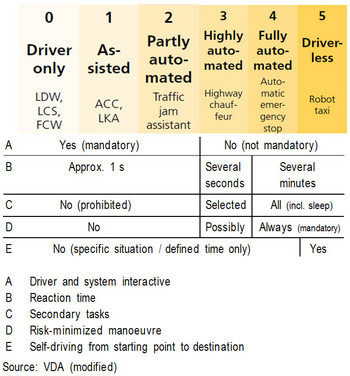

| Degree of automation* | 0-2 | 3 | 4-5 |
|---|---|---|---|
| Driver and system interactive | Yes (mandatory) | No (not mandatory) | |
| Reaction time | Approx. 1 s | Several seconds | Several minutes |
| Secondary tasks | No (prohibited) | Selected | All |
| Risk-minimized manoeuvre | No | Possibly | Always (mandatory) |
| Self-driving | No (specific situation/defined time only) | Yes | |

*Legend: degree of automation

0, Driver only: Lane Departure Warning (LDW), Lane Change System (LCS), Forward Collision Warning (FCW)

1, Assisted: Adaptive Cruise Control (ACC), Lane Keeping Assistant (LKA)

2, Partly automated: traffic jam assistant

3, Highly automated: highway chauffeur

4, Fully automated: automated emergency stop

5, Driverless: robot taxi

Professor Dr Daniel Göhring

daniel.goehring@fu-berlin.de

Standards that are beginning to address the subject of self-driving cars are already being developed on a number of levels. These range from provisions concerning the different levels of automation and the terminology (SAE J3016) to advanced driver assistance systems (ISO TC 204/WG 14), for which a number of standards on levels 0 and 1 (e.g. lane departure warning systems (LDW), adaptive cruise control (ACC), automatic parking systems, etc.) have already been published. WG 14 is currently working on standards for level 2, such as partially automated driving within a lane.

ISO TC 22/SC 39 (Road vehicles/Ergonomics) is concerned with the human factor in level 3 systems, taking a number of research projects into consideration. In ISO TC 204 and CEN TC 278/ETSI ITS, substantial work on the subject of networking has been in progress for many years under the headings of "intelligent transport systems" and "cooperative systems".

Eric Wern (DIN NA Automobiltechnik), wern@vda.de